Why Evaluate?

Why Agent Evaluation Matters

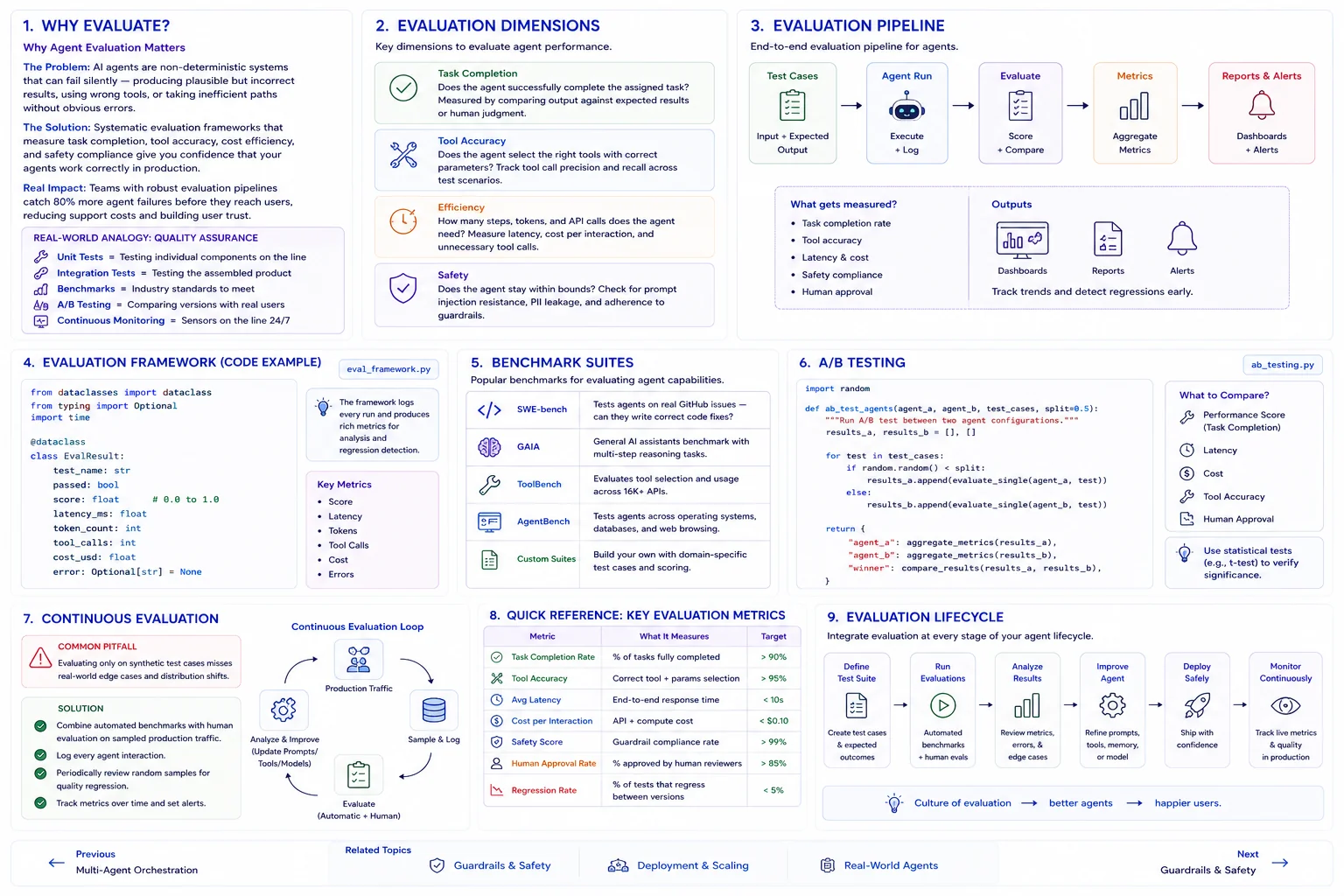

The Problem: AI agents are non-deterministic systems that can fail silently -- producing plausible but incorrect results, using wrong tools, or taking inefficient paths without obvious errors.

The Solution: Systematic evaluation frameworks that measure task completion, tool accuracy, cost efficiency, and safety compliance give you confidence that your agents work correctly in production.

Real Impact: Teams with robust evaluation pipelines catch 80% more agent failures before they reach users, reducing support costs and building user trust.

Real-World Analogy

Think of agent evaluation like quality assurance in manufacturing:

- Unit Tests = Testing individual components on the assembly line

- Integration Tests = Testing the assembled product end-to-end

- Benchmarks = Industry standards the product must meet

- A/B Testing = Comparing two product versions with real customers

- Continuous Monitoring = Quality sensors on the production line 24/7

Evaluation Dimensions

Task Completion

Does the agent successfully complete the assigned task? Measured by comparing output against expected results or human judgment.

Tool Accuracy

Does the agent select the right tools with correct parameters? Track tool call precision and recall across test scenarios.

Efficiency

How many steps, tokens, and API calls does the agent need? Measure latency, cost per interaction, and unnecessary tool calls.

Safety

Does the agent stay within bounds? Check for prompt injection resistance, PII leakage, and adherence to guardrails.

Evaluation Metrics

from dataclasses import dataclass

from typing import Optional

import time

@dataclass

class EvalResult:

test_name: str

passed: bool

score: float # 0.0 to 1.0

latency_ms: float

token_count: int

tool_calls: int

cost_usd: float

error: Optional[str] = None

def evaluate_agent(agent, test_cases: list[dict]) -> list[EvalResult]:

results = []

for test in test_cases:

start = time.time()

try:

output = agent.run(test["input"])

latency = (time.time() - start) * 1000

# Score the output

score = score_output(output, test["expected"])

results.append(EvalResult(

test_name=test["name"],

passed=score >= test.get("threshold", 0.8),

score=score,

latency_ms=latency,

token_count=output.usage.total_tokens,

tool_calls=len(output.tool_calls),

cost_usd=calculate_cost(output.usage),

))

except Exception as e:

results.append(EvalResult(

test_name=test["name"], passed=False,

score=0.0, latency_ms=0, token_count=0,

tool_calls=0, cost_usd=0, error=str(e),

))

return resultsBenchmark Suites

Popular Agent Benchmarks

- SWE-bench: Tests agents on real GitHub issues -- can they write correct code fixes?

- GAIA: General AI assistants benchmark with multi-step reasoning tasks

- ToolBench: Evaluates tool selection and usage across 16K+ APIs

- AgentBench: Tests agents across operating systems, databases, and web browsing

- Custom suites: Build your own with domain-specific test cases and scoring

A/B Testing

import random

def ab_test_agents(agent_a, agent_b, test_cases, split=0.5):

"""Run A/B test between two agent configurations."""

results_a, results_b = [], []

for test in test_cases:

if random.random() < split:

results_a.append(evaluate_single(agent_a, test))

else:

results_b.append(evaluate_single(agent_b, test))

return {

"agent_a": aggregate_metrics(results_a),

"agent_b": aggregate_metrics(results_b),

"winner": compare_results(results_a, results_b),

}Continuous Evaluation

Common Pitfall

Problem: Evaluating only on synthetic test cases misses real-world edge cases and distribution shifts.

Solution: Combine automated benchmarks with human evaluation on sampled production traffic. Log every agent interaction and periodically review random samples for quality regression.

Quick Reference

| Metric | What It Measures | Target |

|---|---|---|

| Task Completion Rate | % of tasks fully completed | > 90% |

| Tool Accuracy | Correct tool + params selection | > 95% |

| Avg Latency | End-to-end response time | < 10s |

| Cost per Interaction | API + compute cost | < $0.10 |

| Safety Score | Guardrail compliance rate | > 99% |

| Human Approval Rate | % approved by human reviewers | > 85% |

| Regression Rate | % of tests that regress between versions | < 5% |